The empirical shift in economics


What’s at stake: Rather than being unified by the application of the common behavioral model of the rational agent, economists increasingly recognize themselves in the careful application of a common empirical toolkit used to tease out causal relationships, creating a premium for papers that mix a clever identification strategy with access to new data.

Economics imperialism in methods

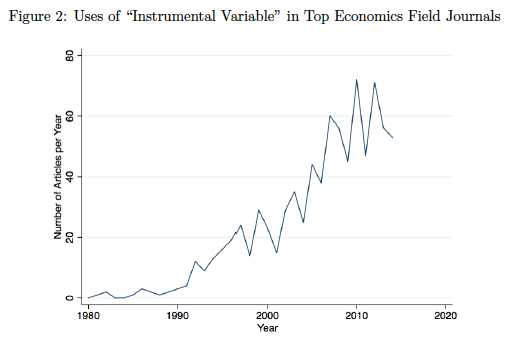

Noah Smith writes that the ground has fundamentally shifted in economics – so much that the whole notion of what "economics" means is undergoing a dramatic change. In the mid-20th century, economics changed from a literary to a mathematical discipline. Now it might be changing from a deductive, philosophical field to an inductive, scientific field. The intricacies of how we imagine the world must work are taking a backseat to the evidence about what is actually happening in the world. Matthew Panhans and John Singleton write that while historians of economics have noted the transition in the character of economic research since the 1970s toward applications, less understood is the shift toward quasi-experimental work.

Matthew Panhans and John Singleton write that the missionary's Bible is less Mas-Colell and more Mostly Harmless Econometrics. In 1984, George Stigler pondered the “imperialism” of economics. The key evangelists named by Stigler in each mission field, from Ronald Coase and Richard Posner (law) to Robert Fogel (history), Becker (sociology), and James Buchanan (politics), bore University of Chicago connections. Despite the diverse subject matters, what unified the work for Stigler was the application of a common behavioral model. In other words, what made the analyses “economic” was the postulate of rational pursuit of goals. But rather than the application of a behavioral model of purposive goal-seeking, “economic” analysis is increasingly the empirical investigation of causal effects for which the quasi-experimental toolkit is essential.


Nicola Fuchs-Schuendeln and Tarek Alexander Hassan write that, even in macroeconomics, a growing literature relies on natural experiments to establish causal effects. The “natural” in natural experiments indicates that a researcher did not consciously design the episode to be analyzed, but researchers can nevertheless use it to learn about causal relationships. Whereas the main task of a researcher carrying out a laboratory or field experiment lies in designing it in a way that allows causal inference, the main task of a researcher analyzing a natural experiment lies in arguing that the historical episode under consideration in fact resembles an experiment. To make that case, it is crucial to identify valid treatment and control groups, that is, to argue that the treatment is in fact randomly assigned.

Source: Nicola Fuchs-Schuendeln and Tarek Alexander Hassan
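The logic of a natural experiment described above can be illustrated with a minimal sketch on simulated data. Everything here is an assumption made for illustration and is not taken from the authors: the function names, the sample size, the "true" effect of 2.0, and the coin-flip assignment. The sketch first checks that treated and control groups look alike on a pre-episode characteristic, then compares their outcomes.

```python
import random
import statistics


def simulate_episode(n=20000, true_effect=2.0, seed=0):
    """Simulate a hypothetical episode that assigns treatment as if by coin flip."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        baseline = rng.gauss(10.0, 3.0)   # characteristic fixed before the episode
        treated = rng.random() < 0.5      # as-good-as-random assignment (assumed)
        noise = rng.gauss(0.0, 1.0)
        outcome = baseline + (true_effect if treated else 0.0) + noise
        rows.append((treated, baseline, outcome))
    return rows


def mean_gap(rows, column):
    """Treated-minus-control difference in means for the given tuple index."""
    treated = [r[column] for r in rows if r[0]]
    control = [r[column] for r in rows if not r[0]]
    return statistics.mean(treated) - statistics.mean(control)


rows = simulate_episode()
# Balance check: groups should look alike before the episode.
balance_gap = mean_gap(rows, 1)
# Outcome comparison: recovers the effect only if assignment is as-good-as-random.
effect_estimate = mean_gap(rows, 2)
print(f"baseline gap: {balance_gap:.2f}, estimated effect: {effect_estimate:.2f}")
```

In a real study the assignment is not simulated, so the balance check is part of the argument rather than a formality: a large gap in pre-episode characteristics would suggest that the episode does not resemble an experiment.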

Data collection, clever identification and trendy topics

Daniel S. Hamermesh writes that top journals are publishing many fewer papers that represent pure theory, regardless of subfield, somewhat less empirical work based on publicly available data sets, and many more empirical studies based on data collected by the author(s) or on laboratory or field experiments. The methodological innovations that have captivated the major journals in the past two decades – experimentation, and obtaining one’s own unusual data to examine causal effects – are unlikely to be any more permanent than was the profession’s fascination with variants of micro theory, growth theory, and publicly available data in the 1960s and 1970s.

Barry Eichengreen writes that, as recently as a couple of decades ago, empirical analysis was informed by relatively small and limited data sets. While older members of the economics establishment continue to debate the merits of competing analytical frameworks, younger economists are bringing to bear important new evidence about how the economy operates. A first approach relies on big data. A second approach relies on new data. Economists are using automated information-retrieval routines, or “bots,” to scrape bits of novel information about economic decisions from the World Wide Web. A third approach employs historical evidence. Working in dusty archives has become easier with the advent of digital photography, mechanical character recognition, and remote data-entry services.

Tyler Cowen writes that top plaudits are won by quality empirical work, but lots of people have good skills. Today, there is thus a premium on a mix of clever ideas — often identification strategies — and access to quality data. Over time, let’s say that data become less scarce, as arguably has been the case in the field of history. Lots of economics researchers might also eventually have access to “Big Data.” Clever identification strategies won’t disappear, but they might become more commonplace. We would then still need a standard for elevating some work as more important or higher quality than other work. Popularity of topic could play an increasingly large role over time, and that is how economics might become more trendy.

Noah Smith (HT Chris Blattman) writes that the biggest winners from this paradigm shift are the public and policymakers, as the results of these experiments are often easy enough for them to understand and use. Women in economics also win from this shift towards empirical economics. When theory doesn’t rely on data for confirmation, it often becomes a bullying/shouting contest where women are often disadvantaged. But with quasi-experiments, they can use reality to smack down bullies, as in the sciences. Beyond orthodox theory, another loser from this paradigm shift is heterodox thinking, as it is much more theory-dominated than the mainstream. It wasn't heterodox theory that eclipsed neoclassical theory; it was empirics.
